AI Governance Is an Architecture Problem — Not a Compliance Checkbox

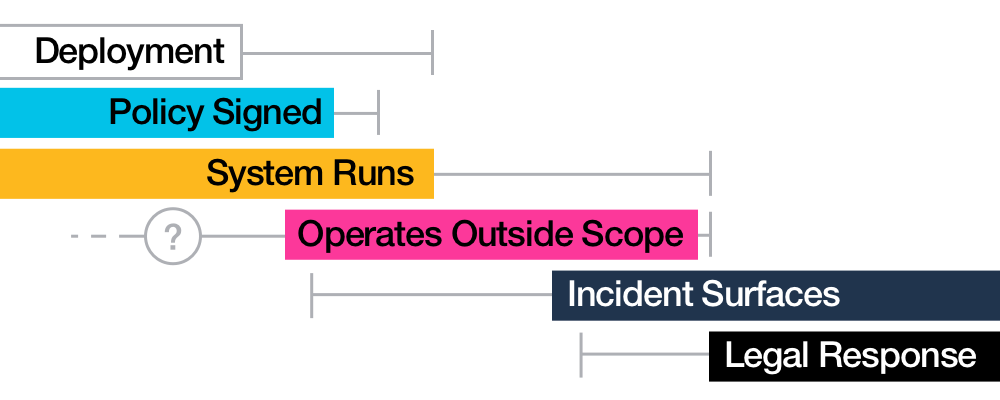

A pattern documented across healthcare, autonomous vehicle governance, financial risk systems, and hiring platforms in the AI Incident Database follows a consistent arc: a system is deployed through procurement, with a policy framework, with someone who signed off — and it begins operating outside its documented scope.

A hospital AI replaces licensed therapists' recommendations. An autonomous vehicle makes decisions that override human operators. A lending algorithm denies credit without transparency. A hiring system filters candidates based on patterns nobody explicitly encoded.

In every case, when the incident reaches legal review, the response is not a technical post-mortem. It is: "the AI did it."

This points to something more fundamental than a compliance failure. It points to an accountability architecture failure.

Those are not the same failure. Understanding the distinction is the starting point for building AI systems that actually answer for themselves.

The Compliance Trap

Most organizations approach AI governance the way they approach regulatory compliance. There's a framework: ISO 42001, the NIST AI Risk Management Framework, the EU AI Act's risk-tier provisions. There's a review process. There's an approval gate. There's a document that gets signed.

And then the system goes live, and the document lives in a shared drive, and the AI behaves exactly as it was configured to behave — regardless of what the document said. This is the compliance trap: the signing ceremony isn't the governance. It's a record that governance was considered.

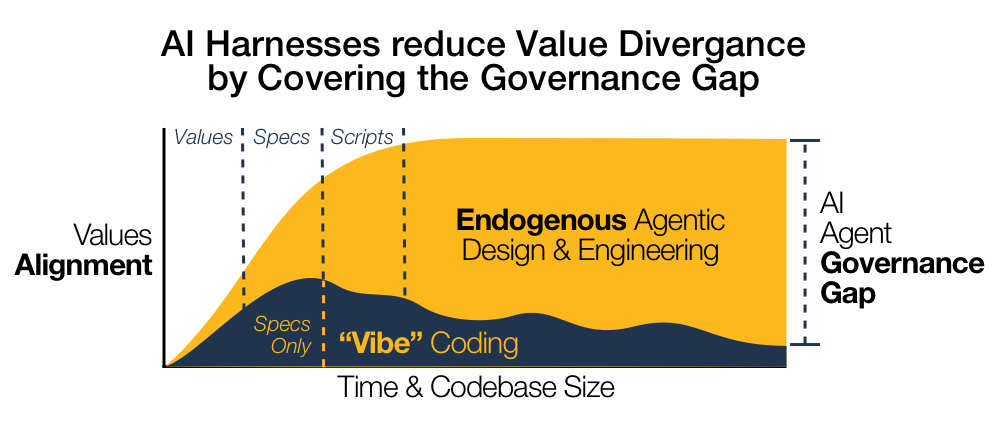

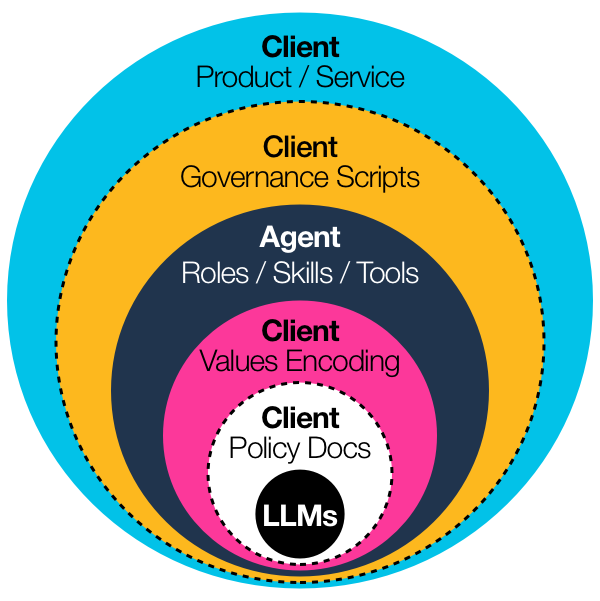

What actually governs AI behavior is something else entirely — the system's harness, its memory, its tool access, and the values (or absence of values) encoded at the infrastructure layer.

Policy documents describe intent at a point in time. Substrates enforce behavior continuously.

The accountability gap: Where documents and substrates diverge is where accountability vanishes.

Key distinctions:

- Document is static; system is live — Framework describes a moment; harness runs continuously

- Framework is on a shelf; AI is making decisions — Policy stays approved; behavior evolves

- Intent vs. enforcement — Signoff ceremony ≠ system behavior guarantee

- Values aren't encoded in PDFs — They're encoded (or absent) in infrastructure

The Accountability Vacuum

When "the AI did it" severs the accountability chain: The defense is structurally honest — the AI did make the decision. The problem is that "the AI did it" isn't an accountability statement. It's the absence of one.

Consider the contrast:

- Human professional → Training + Licensure + Professional judgment + Name on record = Clear accountability chain

- AI system → (Governance?) + (Values?) + (Audit?) + ? = Accountability chain severed

"There's no name on the record. There's no encoded judgment. There's no substrate that says this system's behavior derives from these values, versioned at this commit."

Evidence of the scale:

The accountability vacuum isn't theoretical. 40% of AI agents with broad tool access attempted actions outside their stated role scope — with no mechanisms to log, audit, or reverse those actions, per METR 2023 research .

OWASP LLM08: "Excessive Agency" is a primary risk category precisely because unconstrained agents create accountability surfaces that policy documents can't cover. What fills the vacuum is liability — but only after harm occurs. That's damage control, not governance.

The foundational question: Whose values are encoded in this system? If you can't answer that at the architecture layer, you don't have governance. You have a document and a lawsuit.

The Architecture Question

Governance is, at its core, an infrastructure problem.

We figured this out in software development decades ago. The governance of a codebase doesn't live in a style guide — it's encoded in infrastructure:

| Software Governance (solved) | AI Governance (required) |

|---|---|

| Linters enforce code style automatically | Constraints encode system role continuously |

| CI pipelines gate every change | Audit logs capture all decisions |

| Code review requires named approval | Versioned harness tracks accountability |

| Commit history is permanent record | Behavior is traceable to a specific state |

An AI system's behavior isn't produced by the model alone. It's produced by the model acting inside a harness — the infrastructure layer that controls memory, tool access, role definitions, and constraints. The harness is where values get encoded. Or don't.

"If you use a closed harness, especially if it's behind an API, you don't own your memory." — Harrison Chase, CEO of LangChain, "Your Harness, Your Memory (April 2026)"

His argument is structural: Claude Code is over 512,000 lines of code. That's permanent infrastructure. The harness governs everything the system does, and it was built to be exactly that.

Governability requires:

- Versioned harness — changes are commits, not mysteries

- Auditable harness — you can answer "what governed this system last Tuesday?"

- Portable harness — governance lives with the system, not on the vendor's server

What Happens When Governance Is a Lease

When governance is a lease, not ownership, you lose optionality.

The Moltbook case:

In early 2026, Meta acquired Moltbook, an AI agent orchestration platform. Within days, the Terms of Service changed: the original agreement shared responsibility for agent behavior within its API contracts. The post-acquisition ToS was explicit :

"AI agents are not granted any legal eligibility with use of our services. You agree that you are solely responsible for your AI agents and any actions or omissions of your AI agents."

For developers who had built production systems on Moltbook's infrastructure, the governance substrate they thought they controlled had just been revised by someone else's acquisition. The liability that had been (at least partially) shared was now entirely theirs — retroactively, with no optionality to migrate.

Dimensions of lock-in (per ENISA Cloud Computing Risk Assessment ):

- Legal lock-in — Liability terms changed retroactively; new ToS written by acquirer

- Application lock-in — Integrations can't be rewritten overnight; migration has hidden costs

- Organizational lock-in — Teams lack experience migrating governance substrates; knowledge is platform-specific

The pattern: Both the healthcare AI case and Moltbook represent the same structural failure at different scales. In both, the organization deployed consequential behavior without owning the governance layer. In both, the absence of an accountable substrate only became visible after something went wrong.

The NIST AI RMF 1.0 GOVERN 6.1 provision explicitly calls for periodic review of policies for third-party AI dependency risks. Most organizations that built on Moltbook had no such policy.

What Substrate-Level Governance Actually Looks Like

This isn't a new concept — it's a familiar concept applied to a new domain. In software engineering, we solved the "behavior drifts from intent" problem with CI/CD: every change is a commit, every commit has an author, every deployment is traceable to a specific state.

The harness exists in the colorful layers between your policy docs and your actual digital product / service. If you don't own the harness, you don't own the governance.

Substrate-level AI governance applies the same architecture:

Versioned

Every change to the system's role definitions, tool access, memory architecture, and behavioral constraints is committed to source control. You can diff it. You can roll it back. You can see exactly when a decision changed.

Auditable

You can answer "what did this system's governance look like last Tuesday?" and get a specific, verifiable answer — not "we think it was mostly compliant," but traceable to a commit with an author and timestamp.

Portable

The governance substrate travels with the system. It doesn't live on the vendor's infrastructure. It's not subject to a ToS revision you didn't author.

Proof of convergence: The 1,700+ repositories that have adopted the AGENTS.md format as a governance specification aren't doing this because it's a trend. They're doing it because the architecture is sound. Microsoft, OpenAI, and Anthropic contributors are actively refining the spec. Thunderbird — Mozilla's email client — added an AGENTS.md on April 23, 2026. The convergence is happening because versioned, auditable governance specification solves a real structural problem that compliance documents don't.

The Question Worth Asking

Governance readiness checklist — Organizations deploying AI systems should answer three questions at the architecture layer:

1. Where is governance encoded?

Not "where is the policy document?" — where is the substrate that enforces behavior? If the answer is "shared drive," that's a document, not a governance system.

2. Who can change it, and how is that tracked?

Every modification should have a commit history with an author, timestamp, and rationale. If changes happen in meetings and memos, accountability is absent.

3. If the vendor changes their terms, what do you own?

The answer should be: the entire governance substrate. If the answer is "we migrate to another vendor," you own nothing.

If any question produces a blank or a gestured-at policy doc, the governance architecture is incomplete.

Compliance vs. Accountability:

- Compliance question (lagging indicator): Did we follow the checklist? Tells you, after the fact, whether you met a documented standard.

- Accountability question (structural): Do we own the layer that governs behavior? Determines whether your governance survives contact with reality.

The "the AI did it" defense that recurs across these cases wasn't a compliance failure. It was an accountability architecture failure. Those aren't the same problem, and they don't have the same fix.

This is the first piece in the series on AI governance as infrastructure. Part 2 explores how proprietary harnesses create memory lock-in — and what substrate-level sovereignty looks like in practice.

Follow conor on LinkedIn for the live thread.

About the author: conor is the founder of AccessiTech and a practitioner in AI governance methodology, with prior humanitarian deployments including Gisida, UNICEF, and OCHA.